Mark Zuckerberg, CEO of Meta, attends a U.S. Senate bipartisan Artificial Intelligence Insight Forum at the U.S. Capitol in Washington, D.C., Sept. 13, 2023.

Stefani Reynolds | AFP | Getty Images

Meta said Tuesday it will limit the type of content that teenagers on Facebook and Instagram are able to see, as the company faces mounting claims that its products are addictive and harmful to the mental well-being of younger users.

In a blog post, Meta said the new protections are designed “to give teens more age-appropriate experiences on our apps.” The updates will default teenage users to the most restrictive settings, prevent those users from searching for certain topics and prompt them to update their Instagram privacy settings, the company said.

Meta expects to complete the update over the coming weeks, it said, keeping teens under age 18 from seeing “content that discusses struggles with self-harm and eating disorders, or that includes restricted goods or nudity,” including content shared by a person they follow.

The change comes after a bipartisan group of 42 attorneys general announced in October that they are suing Meta, alleging that the company’s products are hurting teenagers and contributing to mental health problems, including body dysmorphia and eating disorders.

“Kids and teenagers are suffering from record levels of poor mental health and social media companies like Meta are to blame,” New York Attorney General Letitia James said in a statement announcing the lawsuits. “Meta has profited from children’s pain by intentionally designing its platforms with manipulative features that make children addicted to their platforms while lowering their self-esteem.”

In November Senate subcommittee testimony, Meta whistleblower Arturo Bejar told lawmakers that the company was aware of the harms its products cause to young users but didn’t take appropriate action to remedy the problems.

Similar complaints have dogged the company since 2021, before it changed its name from Facebook to Meta. In September of that year, an explosive Wall Street Journal report, based on documents shared by whistleblower Frances Haugen, showed Facebook repeatedly found its social media platform Instagram was harmful to many teenagers. Haugen later testified to a Senate panel that Facebook consistently puts its own profits over users’ health and safety, largely because of algorithms that steered users toward high-engagement posts.

Amid the uproar, Facebook paused its work on an Instagram for kids service, which was being developed for children ages 10 to 12. The company hasn’t provided an update on its plans since.

Meta didn’t say what prompted the latest policy change, but said in Tuesday’s blog post that it regularly consults “with experts in adolescent development, psychology and mental health to help make our platforms safe and age-appropriate for young people, including improving our understanding of which types of content may be less appropriate for teens.”

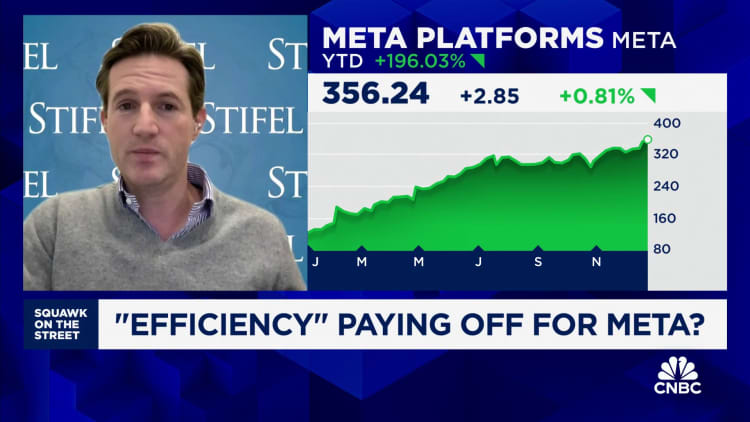

WATCH: 2024 also needs to be an excellent yr for Meta

Source: www.cnbc.com