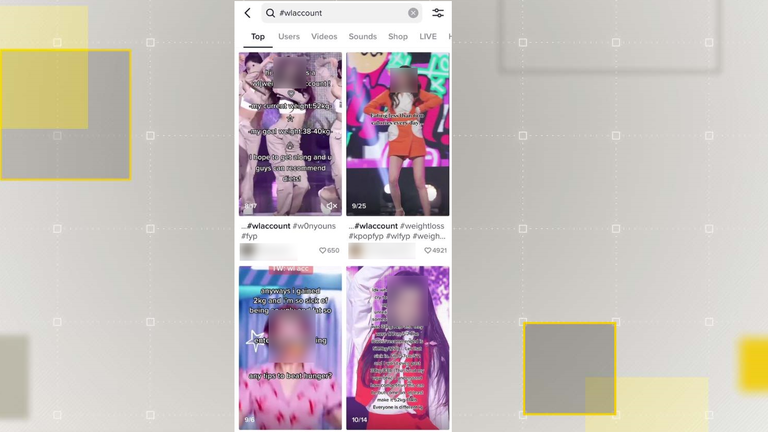

TikTok videos using hashtags previously identified as hosting eating-disorder content are continuing to attract views, new research by the Centre for Countering Digital Hate has found.

A December report by the campaign group identified “coded” hashtags where users could access potentially harmful videos promoting restrictive diets and so-called “thinspo” content, designed to encourage harmful weight loss.

New analysis of those hashtags by the organisation found that since the study, just seven had been removed from the platform and only three carried a health warning on the UK version of the app.

But TikTok said it had removed content which violates its rules, which do not allow the promotion or glorification of eating disorders.

The Centre for Countering Digital Hate (CCDH) said the hashtags it found still on the platform had amassed 1.6 billion more views, which the UK’s leading eating disorder charity Beat has called “extremely concerning”.

“There is no excuse for harmful hashtags and videos being on TikTok in the first place,” Andrew Radford, Beat’s chief executive, said.

“The company should immediately identify and remove damaging content as soon as it is uploaded,” he told Sky News.

Content warning: this article contains references to eating disorders.

TikTok’s community guidelines restrict eating disorder-related content on its platform, and this includes hashtags explicitly associated with it.

But users will often make subtle edits to terminology so they can continue posting potentially harmful material about eating disorders without being spotted by TikTok’s moderators.

‘Coded’ language to avoid detection

In its December report, the CCDH identified 56 TikTok hashtags using “coded” language, under which it found potentially harmful eating disorder content.

The CCDH also found 35 of the hashtags contained a high concentration of pro-eating disorder videos, while it said 21 contained a mix of harmful content and healthy discussion.

Among the material found in both categories were videos promoting unhealthy weight loss, restrictive diets and “thinspo”.

In November, the views across these hashtags stood at 13.2 billion. When CCDH reviewed them in January, it found that the number of views on videos using the hashtags had grown to more than 14.8 billion.

Since the original study, CCDH says seven of the hashtags it identified have been removed from the platform altogether.

Four of these hosted predominantly pro-eating disorder content, while three contained both positive and harmful videos.

In the review, the CCDH found that when accessed by US users, 37 of the hashtags it identified carried a safety warning directing users to the US’s leading eating disorder charity.

However, the same review found that for UK users, just three of those hashtags carry the same kind of warning.

‘Outcry’ by parents

“TikTok is clearly capable of adding warnings to English language content that might harm but is choosing not to implement this for English language content in the UK,” said Imran Ahmed, CEO of the Centre for Countering Digital Hate.

“There can be no clearer example of the way the enforcement of purportedly universal rules of these platforms are actually implemented partially, selectively, and only when platforms feel under real pressure by governments,” he told Sky News.

The new research also indicates that the people accessing material under these hashtags are young.

Using TikTok’s own data analytics tool, CCDH found that 91% of views on 21 of the hashtags came from users under the age of 24. This tool, however, is limited as TikTok does not include data for any users under the age of 18.

“Despite an outcry from parents, politicians and the general public, three months later this content continues to grow and spread unchecked,” Mr Ahmed added.

“Every view represents a potential victim – someone whose mental health might be harmed by negative body image content, someone who might start restricting their diet to dangerously low levels,” he said.

Following CCDH’s findings, a group of charities – including the NSPCC, the Molly Russell Foundation and the US and UK arms of the American Psychological Foundation – have called on TikTok to improve its moderation policies in a letter to its head of safety, Eric Han.

Responding to the findings, a spokesperson for TikTok said: “Our community guidelines are clear that we do not allow the promotion, normalisation or glorification of eating disorders, and we have removed content mentioned in this report that violates these rules.

“We are open to feedback and scrutiny, and we seek to engage constructively with partners who have expertise on these complex issues, as we do with NGOs in the US and UK.”

The Data and Forensics team is a multi-skilled unit dedicated to providing transparent journalism from Sky News. We gather, analyse and visualise data to tell data-driven stories. We combine traditional reporting skills with advanced analysis of satellite images, social media and other open source information. Through multimedia storytelling we aim to better explain the world while also showing how our journalism is done.

Source: news.sky.com