Social media algorithms are still “pushing out harmful content to literally millions of young people” six years after Molly Russell’s death, the schoolgirl’s father has said.

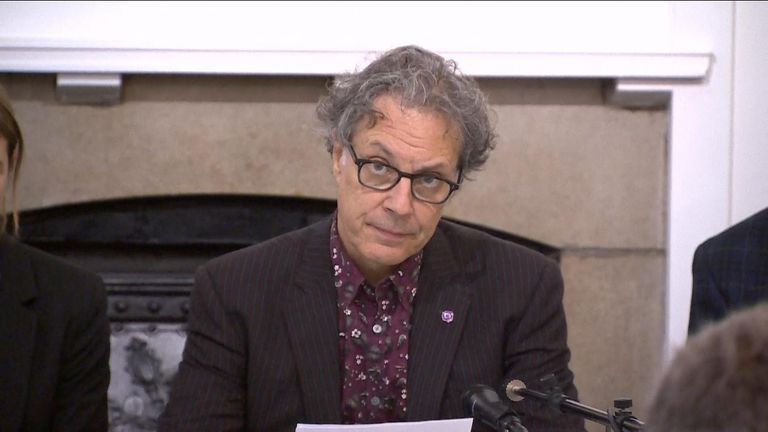

Ian Russell said a new report by the suicide prevention charity set up in his daughter’s honour shows “a fundamental systemic failure” by tech giants “that will continue to cost young lives”.

Molly, who took her own life, aged 14, in November 2017 after viewing posts related to suicide, depression and anxiety online, would have been celebrating her 21st birthday this week.

Last September, a coroner ruled the schoolgirl, from Harrow, northwest London, had been “suffering from depression and the negative effects of online content”.

The Molly Rose Foundation said its new research shows the “shocking scale and prevalence” of harmful content on Instagram, TikTok and Pinterest, six years on from her death.

On TikTok, some of the most viewed posts that reference suicide, self-harm and highly depressive content have been viewed and liked more than one million times, according to the charity.

The report said young people are routinely recommended large volumes of harmful content fed by high-risk algorithms.

While concerns around hashtags were mainly focused on Instagram and TikTok, fears over algorithmic recommendations also applied to Pinterest, it said.

Mr Russell, who is chair of trustees at the Molly Rose Foundation, said: “This week, when we should be celebrating Molly’s 21st birthday, it is saddening to see the horrifying scale of online harm and how little has changed on social media platforms since Molly’s death.

“The longer tech companies fail to address the preventable harm they cause, the more inexcusable it becomes.

“Six years after Molly died, this should now be seen as a fundamental systemic failure that will continue to cost young lives.

“Just as Molly was overwhelmed by the volume of the dangerous content that bombarded her, we’ve found evidence of algorithms pushing out harmful content to literally millions of young people.

“This should stop. It is increasingly hard to see the actions of tech companies as anything other than a conscious commercial decision to allow harmful content to achieve astronomical reach, while overlooking the misery that is monetised with harmful posts being saved and potentially ‘binge watched’ in their tens of thousands.”

The charity’s report has been created with The Bright Initiative, analysing data from 1,181 of the most engaged-with posts on Instagram and TikTok that used well-known hashtags around suicide, self-harm and depression.

The foundation said it is concerned that the design and operation of social media platforms is increasing the risk for some youngsters because of the ease with which they could find potentially harmful content by searching hashtags or through recommendations.

Online Safety Act

It also suggested commercial pressures may be increasing the risks as sites compete to grab the attention of young people and keep them scrolling through their feed.

Mr Russell said the findings highlighted the importance of the new Online Safety Act and called for the new online safety regulator Ofcom to be “daring” in the way it holds social media companies to account under the new laws.

The Act tasks tech companies with protecting children from damaging content and Ofcom will publish rules in the next few months around the promotion of material related to suicide and self-harm, with each new code requiring parliamentary approval before it is put in place.

A Meta spokesperson said: “We want teens to have safe, age-appropriate experiences on Instagram, and have worked closely with experts to develop our approach to suicide and self-harm content, which aims to strike the important balance between preventing people seeing sensitive content while giving people space to talk about their own experiences and find support.”

They said more than 30 tools have been built, including a control to limit the type of content youngsters are recommended, and the company will announce further measures soon.

TikTok and Pinterest have also been contacted for comment.

Source: news.sky.com